Hey - Coding, AI, and Self-Improvement

Leverage, judgment, and what's left when AI does the thinking

TL;DR: AI doesn’t replace thinking. It tempts you to skip it. Used passively, it weakens understanding; used intentionally, it compounds it. Knowing which work deserves your attention and which can be safely outsourced is how you’ll preserve your edge in the coming years.

I’ve been toying with the idea of a personal blog for about a year. For me, writing is a process of discovery and a stress test for my thoughts and beliefs. Grounding my theses in facts is the fastest way to figure out if they truly hold up. Often they don’t, and I learn something new. Win-win.

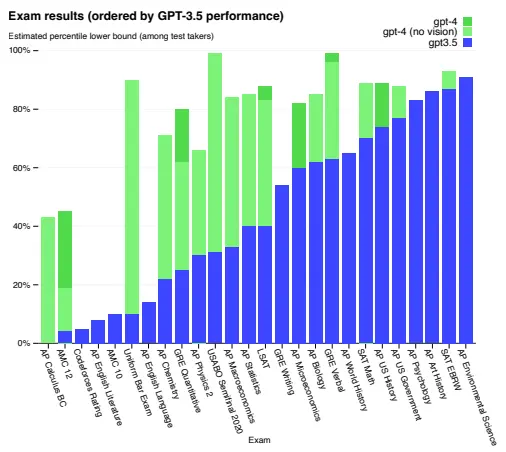

After graduating high school in 2022, I took a gap year to spend some time traveling, working, and coding. My gap year coincided with a pretty significant event: the release of the GPT-4 model in March of 2023. GPT-4 marked a milestone in LLM competence (see figure below) and was when I started using LLMs daily for writing, planning, coding, and more. This blog post is my first attempt since then to write without AI constantly looking over my shoulder.

Performance of GPT-3.5 and GPT-4 on various exams. Source:

GPT-4 Technical Report

In September 2023, I started university in Canada. By then, models were capable enough that I could outsource tedious homework. By GPT-4o (May 2024), LLMs could guide me through “relatively” complex problems, and by the release of o1 (September 2024), studying on my own had become so easy that I pretty much stopped going to class.

However, around a year ago, I became increasingly frustrated by a pretty big problem.

If LLMs are doing most of the thinking for me, what is my value add?

Here’s where I’ve landed: when we use AI without critical thinking, we erode the edge humans still have over LLMs, namely intuition, taste, and judgment. Those are earned through experience and mistakes, and they’re also what let you ask the right questions in the first place. Then there’s accountability. Who’s responsible when LLM-generated code causes bugs or real damage? The engineer? The company? Anthropic? Autonomous agents can’t be held accountable, but the individual contributors managing them can.

This isn’t a software-only story. Any knowledge work where AI can generate output (writing, analysis, research, and more) has the same dynamic. Output will get a lot cheaper. Figuring out what matters won’t. If you don’t understand the system, you can’t tell which parts are load-bearing and which are noise.

AI tools aren’t the problem. Skipping the thinking is. Stack Overflow at least forced you to adapt the answers to your codebase, and that adaptation was where a lot of the understanding happened. LLMs can now do that part too. If you don’t take the time to think something through, explore the alternatives, and form an opinion, you don’t have one. You’re just echoing output you don’t own.

Don’t get me wrong: that’s not inherently bad. You’re trading understanding for speed. The risk is doing it unconsciously. I try to slow down when the system is new or foundational (new frameworks, auth providers, data models, architecture). Once I’ve thought it through (read the docs, discussed it with AI) and I’m confident the core is solid, I can let the AI crank through UI and simple business logic without sweating every line.

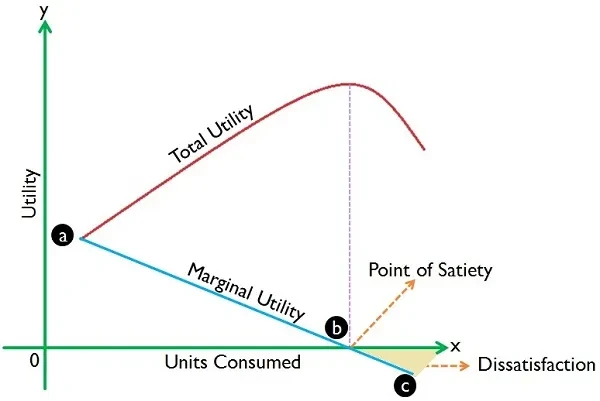

I make the tradeoff of long-term learning vs. output every day. For a lot of college work, my goal isn’t mastery, it’s passing the next project or exam. I’m optimizing to get the most returns from the steep part of the curve, well before the marginal utility of my time becomes painfully low. After I’ve finished that course, I’m fine with most of its content leaving my brain. I think that’s a rational choice, as long as I’m consciously making it and honest about the tradeoffs.

Diminishing marginal utility. Source:

StreetFins

Anthropic published a study last week titled “How AI assistance impacts the formation of coding skills”. Here are the parts I found interesting:

We found that using AI assistance led to a statistically significant decrease in mastery. On a quiz that covered concepts they’d used just a few minutes before, participants in the AI group scored 17% lower than those who coded by hand… The participants who showed stronger mastery used AI assistance not just to produce code but to build comprehension while doing so—whether by asking follow-up questions, requesting explanations, or posing conceptual questions while coding independently.

Cognitive effort—and even getting painfully stuck—is likely important for fostering mastery.

And this footnote is becoming increasingly relevant as “agentic coding” becomes the norm:

Importantly, this setup is different from agentic coding products like Claude Code; we expect that the impacts of such programs on skill development are likely to be more pronounced than the results here.

My two takeaways:

- AI helps comprehension only when you push back on it: ask questions, challenge the output, do the thinking.

- The feedback loop that learning requires gets harder to maintain as tools become more autonomous. The more agentic the workflow, the easier it is to check out entirely.

While this study is specifically about coding, I’d wager the same principles apply to most knowledge work.

Anthropic is not the only company researching AI, learning, and code quality. CodeRabbit’s state of AI report, published in December, found that AI-written code produced roughly 1.7x more issues than human-written code (grain of salt: they sell code review tools). Models have improved since then, so the quality gap has likely narrowed, but that makes the skill atrophy point more relevant, not less. Better models make it easier, not harder, to check out.

I struggle with this daily: I tell the LLM to start implementing before I’ve nailed down the specifics and planned it out. It usually bites me in the ass a few hours or days later when I need to refactor or redesign. The addictive part is how quickly you can go from idea to implementation. It’s become easy to decide on a feature and ship it in an afternoon, but that speed makes it tempting to skip the hard questions: Is this the right approach? Is it even worth building? How do I build for the roadmap without overengineering v1?

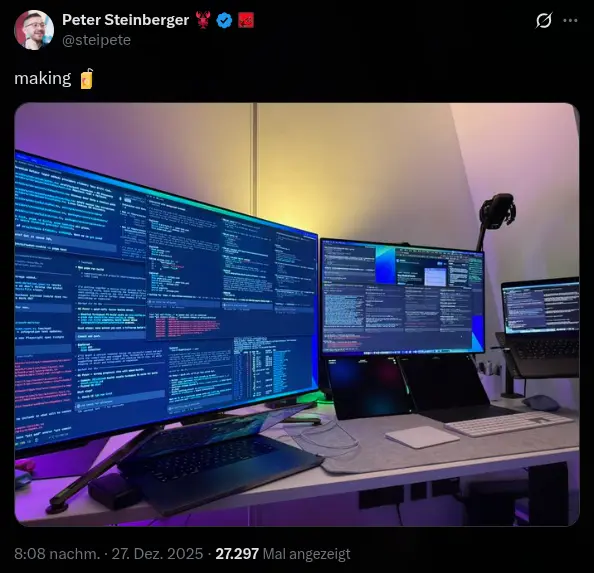

Shipping new features is fun, but testing them, thinking through edge cases, or validating their usefulness often isn’t. Taking five to ten minutes to structure my thoughts on a piece of paper, or to talk them through with someone, has helped me save hours not building the wrong features. That really matters now since AI allows one person to build faster and bigger than entire teams could 2 or 3 years ago. Peter Steinberger, the creator of OpenClaw (previously ClawdBot), has “authored” almost 35,000 commits already this year and is working on 3-8 projects simultaneously. He’s said that “agentic engineering” makes him think more, not less—mundane code is automated, but the mental load shifts to planning, switching contexts, and designing good subsystems before the agents run.

Steinberger’s coding setup,

shared on X

OpenClaw is an open-source AI assistant that runs 24/7 on your own hardware, with persistent memory and integrations with calendars, email, and more. It can message you proactively and is extensible through community-built skills. Steinberger built it in about two months. It’s gone viral in the last few weeks.

That’s the upside of leveraging AI: individual contributors can now ship at startup scale. The downside is that the same velocity that ships features also ships vulnerabilities. Researchers found a CVSS 8.8 remote code execution vulnerability in OpenClaw last week. Still, OpenClaw is a great example of how fast useful software can be built and distributed today.

I don’t know what’s going to happen to software engineering. Writing code has gotten a lot easier. For me, figuring out how to build great products, how to design good architecture, and when to plan vs. when to build is still difficult. In the meantime, I’m trying to figure out which skills will still matter in two years.

This blog is part of that. So is Root, a side project that helps me retain more of what I read and hear. I like interviews, podcasts, and books. Root is my attempt to condense those into something I can use and find again later. I’m writing as I build to hold myself accountable and to document what I learn for future projects.

That’s it for now. More posts coming, not sure when.